Chris, there is value in increasing “gain” beyond unity. This has been debated for many years, but the reason to use a higher gain has to do with quantization error. Unity gain is FAR from being free of quantization error. The error definitely exists, and can be obvious in a noise plot with low read noise cameras.

Now, with an older camera that has higher read noise, say several electrons worth, the quantization error is generally going to be swamped by the read noise itself. So you won’t notice it, and it does not really matter.

I believe the situation changes when you have under 2e- read noise, as even at unity gain, quantization noise is ~0.3e-, and the error itself is actually quite large, and can be visible in shallow signals. Consider this:

The quantization ERROR is quite obvious at 1e-/ADU, where read noise is only around 1.6-1.8e-. You can see the stepped and chunky nature of the noise in the crop to the right as well. There are only 10 discrete values that each pixel can snap to in that entire crop…and yes, this is at unity! At 0.25e-/ADU the total noise is lower (it is only about 1.2e-), and quantization error is nowhere to be seen. The noise profile in the crop to the right is much more natural, gaussian, clean and random. There are thousands of possible values for the pixels at the high gain. If your goal is to chase FAINT signals, a higher gain is useful. This is particularly true with narrow band imaging, where you may have areas of the frame that have little to no signal.
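For reference, the ~0.3e- figure for unity gain is what the usual uniform-quantization model gives: an ideal ADC step of g e-/ADU contributes about g/√12 electrons RMS. Here is a rough sketch of that arithmetic for the two gains discussed above. The function names are made up for illustration, and treating the quoted 1.7e- and 1.2e- as the noise before quantization is an assumption (the post does not say whether those figures already include it). Note that the RMS number says nothing about the stepped look of the noise, which is the part you actually see in the crops.

```python
import math

def quantization_noise_e(gain_e_per_adu):
    """RMS quantization noise in electrons for an ideal ADC step (uniform model)."""
    return gain_e_per_adu / math.sqrt(12.0)

def quantization_share(read_noise_e, gain_e_per_adu):
    """Fraction of the total noise variance that comes from quantization."""
    q = quantization_noise_e(gain_e_per_adu)
    return q ** 2 / (read_noise_e ** 2 + q ** 2)

# (gain in e-/ADU, read noise in e-): values quoted in the post,
# assumed here to be the noise BEFORE quantization is added.
for gain, read_noise in ((1.0, 1.7), (0.25, 1.2)):
    q = quantization_noise_e(gain)
    total = math.hypot(read_noise, q)  # add the two terms in quadrature
    share = quantization_share(read_noise, gain)
    print(f"{gain:.2f} e-/ADU: quantization {q:.3f} e-, "
          f"total {total:.3f} e-, variance share {share:.2%}")
```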

Another point here is that it takes very little to swamp the noise at 1.2e-, including any quantization error (which is already vanishingly small). A 5e- signal will do it! At unity gain, the quantization ERROR is clearly a non-trivial component of the noise. So you not only need more exposure to deal with the higher read noise itself, you also need enough signal (and thus shot noise) to eliminate the impact of quantization error, which can require additional exposure beyond what is necessary just to swamp the underlying read noise. So, even though unity gain has more dynamic range…you often end up needing to expose more anyway to fully bury the quantization error, which eats into that DR more…

On the other hand, a camera with say 8e- read noise would need a 256e- signal to swamp the read noise. Even if you had higher quantization error with 8e- read noise, by the time you have 250e- of signal, the quantization error is not going to matter. The ultra low noise with newer cameras (and this does not only apply to CMOS, there are some CCDs like the Sony ICX834 that have under 2e- read noise as well) is what changes things here.
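The 256e- figure for an 8e- camera is consistent with a "shot noise is twice the read noise" rule of thumb, since shot noise is the square root of the signal in electrons. A quick sketch of that arithmetic follows. The factor of 2 is inferred from the 8e- → 256e- example rather than stated anywhere, and `signal_to_swamp` is a made-up name. The same rule gives about 5.8e- for 1.2e- read noise, in line with the 5e- mentioned earlier.

```python
def signal_to_swamp(read_noise_e, factor=2.0):
    """Signal (in e-) whose shot noise equals `factor` times the read noise.

    Shot noise is sqrt(signal), so signal = (factor * read_noise) ** 2.
    The default factor of 2 is an assumption inferred from the post's example.
    """
    return (factor * read_noise_e) ** 2

for read_noise in (1.2, 1.7, 8.0):
    print(f"{read_noise} e- read noise -> ~{signal_to_swamp(read_noise):.1f} e- of signal")
```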

There are also real-world practical factors to consider. You can and often will clip stars a bit more at a higher gain, however it is usually not much more, and in practice it does not matter even if you clip a little. I generally am ok with clipping the centroids of the brightest stars. Once I stretch, you can’t tell:

What you can see, though, is the improvement in the background noise profile at the higher gain. There is more quantization error in the low gain image above, despite the fact that the exposures are 4x longer. That, IMO, is worth it (especially with CMOS cameras, which often have more FPN and random or semi-random patterns at lower gains). Here are larger crops that better demonstrate the difference in noise characteristic:

Gain 0 5x12m:

Gain 200 20x3m:

It is not a huge difference in noise profile, but I do prefer the high gain version here. And, as you can see, there is no visible difference in stars here. The shorter exposures largely compensate for the loss of DR. Here are some unstretched crops of individual subs from the above to demonstrate (click the images to see full, the forum is chopping off the right side a bit):

Gain 0 12m:

Gain 200 3m:

To be clear here…at Gain 200…only two stars are clipped. One has several pixels clipped in the center, the other has only the very central pixel clipped. The rest of the stars, while they are brighter and did become visible, are NOT clipped. The “cost” of lower DR at the higher gain is minimal, and effectively a non-issue in practice, as once you stretch you can’t tell the difference anyway.

If you are chasing faint details, high gains with low quantization error (and also even lower read noise) are useful. Personally, with deeper integrations and much deeper stretching, I much prefer the cleaner and more random background sky noise profile I get with higher gain narrow band imaging over what I get at lower gains. With deep stacking and stretching, lower gains sometimes exhibit more issues from FPN (i.e. banding), which are just not present at higher gains. Unity on the ASI1600 is actually pretty good, but deep integrations will still often reveal some banding. I never have that issue at Gain 200, though (which BTW is just about 2x unity gain, or ~0.5e-/ADU).

It should also be called out clearly here that once you are around 0.5e-/ADU, the bit depth of the camera just doesn’t matter. Lots of CCD cameras have gains ranging from 0.3-0.6e-/ADU. Once you are sampling each electron about that finely, bit depth is a total non-issue. There IS a loss of DR on lower bit depth cameras, but it usually doesn’t matter in practice, not once the data is stretched.
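To put a rough number on the DR loss from lower bit depth, here is a back-of-the-envelope sketch that compares the ADC-limited range of two bit depths at the same e-/ADU. It ignores full-well capacity and offset, which on a real sensor may be what limits you first, and the 1.5e- read noise is only a placeholder, not a measurement. The function name is made up for illustration.

```python
import math

def adc_limited_dr_stops(bit_depth, gain_e_per_adu, read_noise_e):
    """Dynamic range in stops, limited only by the ADC's top code and the read noise.

    Ignores full-well capacity and offset (a simplifying assumption).
    """
    full_scale_e = (2 ** bit_depth - 1) * gain_e_per_adu
    return math.log2(full_scale_e / read_noise_e)

GAIN = 0.5        # e-/ADU, the level discussed above
READ_NOISE = 1.5  # e-, illustrative placeholder only

for bits in (12, 16):
    stops = adc_limited_dr_stops(bits, GAIN, READ_NOISE)
    print(f"{bits}-bit at {GAIN} e-/ADU: {stops:.1f} stops (ADC-limited)")
```

In this simple model the gap between the two comes entirely from the top end of the range; the sampling of the noise floor is identical at the same e-/ADU, which is the sense in which bit depth stops mattering for faint signal.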

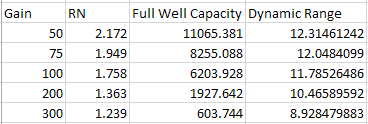

When I first got the ASI1600 in 2016, I did some testing. I had originally guessed that I would find “half unity” at around gain 65-70, and ended up finding it, through basic testing, at gain 76 (7.6dB, or 2e-/ADU). I then guessed that gain 200 would be about “twice unity” or 0.5e-/ADU. I tested it, and when it came out to 0.48e-/ADU I just went with it.
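Those two measurements are consistent with each other if you assume the gain setting is in units of 0.1dB (as the 76 = 7.6dB figure implies) and behaves like an ordinary voltage gain, i.e. 20·log10. Here is a small sketch that extrapolates from the measured gain 76 point. The function name and the use of gain 76 as the reference are just choices for illustration, and the real camera may deviate from this idealized curve.

```python
def e_per_adu(gain_setting, ref_setting=76, ref_e_per_adu=2.0):
    """Estimate e-/ADU at a gain setting from one measured reference point.

    Assumes the setting is in 0.1 dB steps and follows a 20*log10 voltage-gain
    law; both are assumptions, not camera documentation.
    """
    db_change = (gain_setting - ref_setting) / 10.0
    return ref_e_per_adu / (10 ** (db_change / 20.0))

for setting in (76, 200):
    print(f"gain {setting}: ~{e_per_adu(setting):.2f} e-/ADU")
```

With the gain 76 measurement as the anchor, gain 200 comes out at roughly 0.48e-/ADU, which agrees with what I measured.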
